Example of Media Bias:

(The following is excerpted from a May 10 article by Will Oremus, senior tech writer, Slate.com):

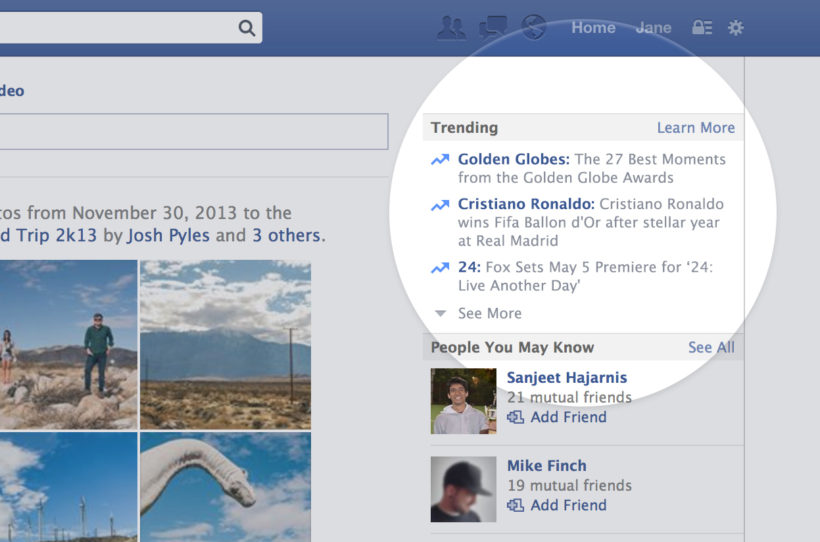

Facebook must have thought the online news game was pretty easy. Two years ago, it plucked a small team of about a dozen bright, hungry twentysomethings fresh out of journalism school or entry-level reporting jobs. It stuck them in a basement, paid them contractor wages, and put them to work selecting and briefly summarizing the day’s top news stories and linking to the news sites that covered them. It called them curators, not reporters. Their work appeared in the “Trending” section of the Facebook home page and mobile app, where it helped to define the day’s news for millions of Facebook users.

That is, by any reasonable definition, a form of journalism. And it made Facebook a de facto news organization.

But Facebook refused to acknowledge that….

On Tuesday, Facebook became the subject of a Senate inquiry over claims of anti-conservative bias in its Trending section. Senate Commerce Committee Chairman John Thune, a South Dakota Republican, sent Mark Zuckerberg a letter asking a series of pointed questions about how Facebook chooses stories for the section, how it trains its news “curators,” who’s responsible for their decisions, and what steps it’s taking to investigate the bias claims. He also asks for detailed records of stories that the company decided not to include in the Trending section despite their popularity among Facebook users.

The inquiry followed a report by Gizmodo’s Michael Nunez, in which anonymous former Facebook “curators” described the subjective process by which they assembled the Trending section. Facebook had publicly portrayed the section – which you can find near the top right on the Facebook website or under the search tab on the Facebook app – as an algorithmically driven reflection of the most popular stories its users are reading at any given time. But the ex-curators said they often filtered out stories that were deemed questionable and added others they deemed worthy [even if they were not trending]. One, a self-identified conservative, complained that this led to subtle yet pervasive liberal bias, since most of the curators were politically liberal themselves. Popular stories from conservative sites such as Breitbart, for instance, were allegedly omitted unless more mainstream publications such as the New York Times also picked them up.

None of this should come as a surprise to any thoughtful person who has worked as a journalist. Humans are biased. Objectivity is a myth, or at best an ideal that can be loosely approached through the very careful practice of trained professionals. The news simply is not neutral. Neither is “curation,” for that matter, in either the journalistic or artistic application of the term.

There are ways to grapple with this problem honestly:

- to attempt to identify and correct for one’s biases

- to scrupulously disclose them

- to employ an ideologically diverse staff

- perhaps even to reject objectivity as an ideal and embrace subjectivity

But you can’t begin to address the subjective nature of news without first acknowledging it. And Facebook has gone out of its way to avoid doing that, for reasons that are central to its identity as a technology company.

Here’s how Facebook answers the question “How does Facebook determine what topics are trending?” on its own help page:

Trending shows you topics that have recently become popular on Facebook. The topics you see are based on a number of factors including engagement, timeliness, Pages you’ve liked and your location.

No mention of humans or subjectivity there. Similarly, Facebook told the tech blog Recode in 2015 that the Trending section was algorithmic, i.e., that the stories were selected automatically by a computer program:

Once a topic is identified as trending, it’s approved by an actual human being, who also writes a short description for the story. These people don’t get to pick what Facebook adds to the trending section. That’s done automatically by the algorithm. They just get to pick the headline.

Bias in the selection of stories and sources? Impossible. It’s all done “automatically,” by “the algorithm”! Which is as good as saying “by magic,” for all it reveals about the process.

It’s not hard to fathom why Facebook is so determined to portray itself as objective. With more than 1.6 billion active users, it’s larger than any political party or movement in the world. And its wildly profitable $340 billion business is predicated on its near-universal appeal. You don’t get that big by taking sides.

For that matter, you don’t get that big by admitting that you’re a media company. As the New York Times’ John Herrman and Mike Isaac point out, 65 percent of Americans surveyed by Pew view the news media as a “negative influence on the country.” For technology companies, that number is just 17 percent.

It’s very much in Facebook’s interest to remain a social network in the public’s eyes, even in the face of mounting evidence that it’s something much bigger than that. And it’s in Facebook’s interest to shift responsibility for controversial decisions from humans, whom we know to be biased, to algorithms, which we tend to lionize.

…The algorithm that surfaces the stories might skirt questions of bias by simply ranking them in order of popularity, thus delegating responsibility for story selection from Facebook’s employees to its users. Even that – the notion that what’s popular is worth highlighting – represents a human value judgment, albeit one that’s not particularly vulnerable to accusations of political bias. (That’s why Twitter isn’t in the same hot water over its own simpler trending topics module.) …

Facebook can and will dispute the specifics of these claims, as the company’s vice president of search, Tom Stocky, did in a Facebook post Tuesday morning. But they’re missing the point entirely. Facebook’s problem is not that its “curators” are biased. Facebook’s problem is that it refuses to admit that they’re biased. …

(The above is excerpted from a May 10 article by Will Oremus, senior tech writer, Slate.com)

To accurately identify different types of bias, you should be aware of the issues of the day and of the liberal and conservative perspectives on each issue.

Types of Media Bias: Questions

1. What types of bias does the Gizmodo article expose about Facebook’s “trending news” section?

2. Jillian Kay Melchior wrote in the New York Post on May 11:

Such allegations are especially disturbing given Facebook’s outsized role in news distribution. With 1.65 billion active monthly users as of May 1, its audience is enormous. A recent Pew study, looking at news consumption on smartphones, discovered that Facebook sends more readers to news sites than any other social-media platform.

Facebook also has exceptional potential to influence the politics of millennials, a group that just surpassed baby boomers as America’s biggest generation. Sixty-one percent of adults under 34 consume political news from Facebook, according to Pew.

Narrowing their exposure to diverse opinions and perspectives changes the way they view the world.

Do you agree with this assertion? Explain your answer.

3. Would this information about Facebook affect your use of its “trending news” section? Explain your answer.

(Read another analysis of the Facebook story by Farhad Manjoo at The New York Times: “Facebook’s Bias Is Built-In, and Bears Watching”)

Scroll down to the bottom of the page for the answers.

Answers

1. Facebook displays bias by omission, story selection and spin (claiming it uses computer algorithms to list the “trending” news stories).

2. Opinion question. Answers vary.

3. Opinion question. Answers vary.